This project is publicly available at https://github.com/WHULai/Time-Series-Classifier and builds upon the dataset from https://github.com/zamaex96/Hybrid-CNN-LSTM-with-Spatial-Attention.

I implemented a comprehensive time series classification framework that compares various deep learning and traditional machine learning models. The project provides reproducible results with fixed random seeds and automatic visualization generation.

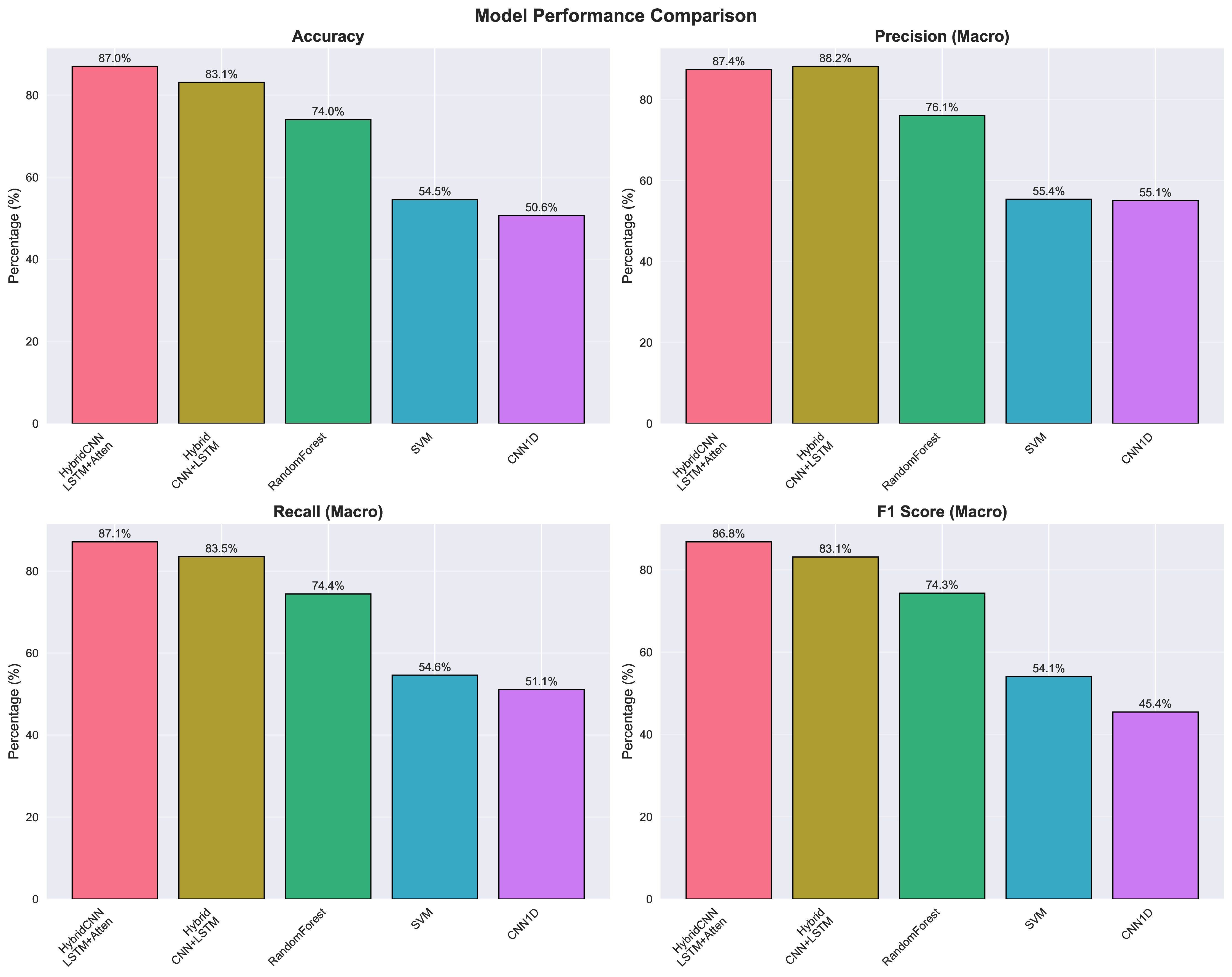

Performance Summary

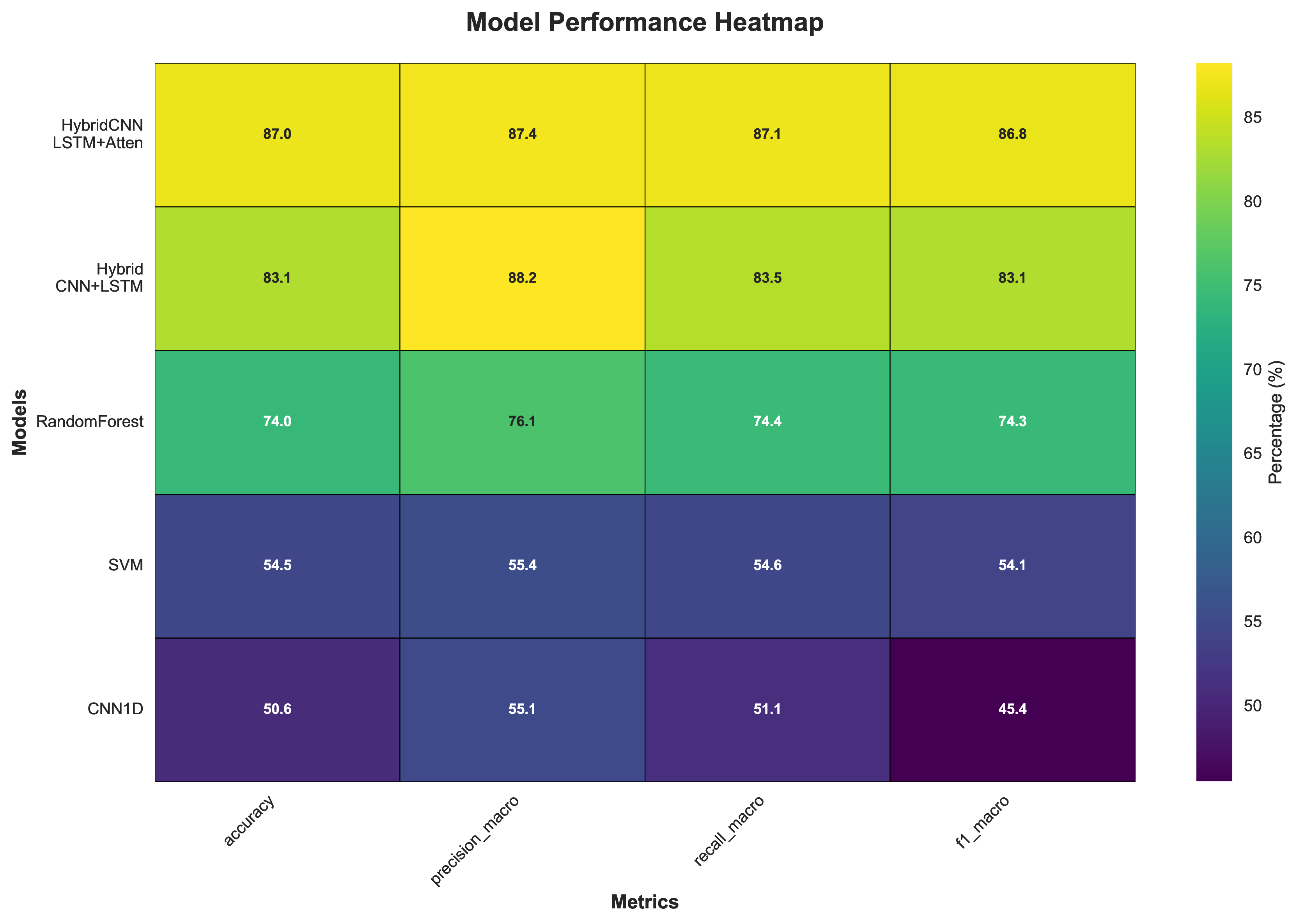

The models achieved the following performance on the test set (seed=42):

| Model | Accuracy | Precision | Recall | F1-Score | Training Time |
|---|---|---|---|---|---|
| Hybrid CNN-LSTM with Attention | 87.01% | 87.44% | 87.11% | 86.77% | ~3-5 min |
| Hybrid CNN-LSTM | 83.12% | 88.20% | 83.49% | 83.11% | ~2-3 min |
| Random Forest | 74.03% | 76.10% | 74.41% | 74.32% | ~30 sec |
| SVM | 54.55% | 55.36% | 54.61% | 54.06% | ~20 sec |
| 1D CNN | 50.65% | 55.06% | 51.12% | 45.43% | ~3-4 min |

All models were trained with a fixed random seed (42) for reproducibility.

Project Overview

This project implements a model zoo for time series classification, including:

- Hybrid CNN-LSTM with Spatial Attention (Best: 87.01% accuracy)

- Standard Hybrid CNN-LSTM (83.12% accuracy)

- 1D Convolutional Neural Network (50.65% accuracy)

- Traditional ML baselines (Random Forest: 74.03%, SVM: 54.55%)

The framework provides unified, reproducible comparisons with automatic visualization generation.

Key Features

- Reproducible: Fixed random seeds (Python: 42, NumPy: 42, PyTorch: 42)

- Leak-free: Group-based data splitting prevents information leakage

- Modular: Clean architecture with separate modules for data, models, training, and evaluation

- Tested: Comprehensive unit tests and smoke tests

- Visualized: Automatic generation of training curves, confusion matrices, and comparison charts
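
The seeding described in the first bullet can be sketched as a single helper. This is a minimal illustration, not the project's actual code: the function name `set_seed` is hypothetical, and NumPy and PyTorch are imported optionally so the snippet also runs where they are not installed.

```python
import os
import random


def set_seed(seed: int = 42) -> None:
    """Seed every random number generator available in this environment."""
    # Only affects hash randomization in subprocesses started after this call.
    os.environ["PYTHONHASHSEED"] = str(seed)
    random.seed(seed)  # Python's built-in generator
    try:
        import numpy as np
        np.random.seed(seed)  # NumPy's global generator
    except ImportError:
        pass  # NumPy not installed; nothing to seed
    try:
        import torch
        torch.manual_seed(seed)           # PyTorch generators
        torch.cuda.manual_seed_all(seed)  # silently ignored without CUDA
    except ImportError:
        pass  # PyTorch not installed; nothing to seed


# Re-seeding reproduces the same random sequence.
set_seed(42)
first = [random.random() for _ in range(3)]
set_seed(42)
second = [random.random() for _ in range(3)]
print(first == second)
```

Seeding alone may not make GPU training bit-for-bit identical across machines, since some CUDA kernels are nondeterministic. It does make data shuffling and weight initialization repeatable.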

Model Architectures

Hybrid CNN-LSTM with Spatial Attention

The best-performing model combines:

- CNN layers for feature extraction from time series data

- Spatial attention mechanism to highlight important temporal regions

- LSTM for sequential modeling of temporal dependencies

- Fully connected classification layer

The spatial attention mechanism uses a 1D convolutional layer followed by softmax to generate attention weights that emphasize relevant time steps.
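
To make the arithmetic concrete, here is a framework-free sketch of that idea in plain Python. The source states only "1D convolution followed by softmax", so several details here are assumptions for illustration: the kernel size, the zero padding, a single output channel, and the choice to multiply the features by the weights. The function names are hypothetical.

```python
import math


def attention_weights(features, kernel, bias=0.0):
    """Return one softmax-normalized weight per time step.

    features: list of T time steps, each a list of C channel values.
    kernel:   list of K taps (K odd), each a list of C weights.
    """
    n_steps, n_taps = len(features), len(kernel)
    pad = n_taps // 2
    scores = []
    for t in range(n_steps):
        score = bias
        for k in range(n_taps):
            idx = t + k - pad
            if 0 <= idx < n_steps:  # zero padding outside the sequence
                score += sum(w * x for w, x in zip(kernel[k], features[idx]))
        scores.append(score)
    # Numerically stable softmax over the time axis.
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def apply_attention(features, weights):
    """Scale each time step's features by its attention weight."""
    return [[w * x for x in row] for row, w in zip(features, weights)]


# Toy input: 5 time steps, 2 channels, with a burst of activity at step 2.
features = [[0.1, 0.0], [0.2, 0.1], [2.0, 1.5], [0.3, 0.2], [0.0, 0.1]]
kernel = [[1.0, 1.0], [1.0, 1.0], [1.0, 1.0]]  # K=3 taps, illustrative values
weights = attention_weights(features, kernel)
attended = apply_attention(features, weights)
print([round(w, 3) for w in weights])
```

Because the softmax runs over the time axis, the weights sum to 1 and the step with the largest convolution score receives the most emphasis. In a trained model the kernel values would be learned rather than fixed.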

Model Comparison Framework

All models are evaluated using a unified framework that calculates:

- Accuracy: Overall classification correctness

- Precision: Proportion of true positives among predicted positives

- Recall: Proportion of true positives among actual positives

- F1-Score: Harmonic mean of precision and recall

- Confusion matrices: Detailed per-class performance
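
The sketch below shows how these metrics can be derived from a confusion matrix. The source does not say which averaging is used for the multi-class case, so macro averaging (an unweighted mean over classes) is an assumption here. It is at least consistent with the results table, where each F1 value is lower than both the precision and the recall reported for the same model, which a harmonic mean of those two numbers could not be. The function name and the toy matrix are illustrative only.

```python
def macro_metrics(cm):
    """Compute accuracy and macro-averaged precision, recall, and F1.

    cm[i][j] is the number of samples with true class i predicted as class j.
    """
    n = len(cm)
    total = sum(sum(row) for row in cm)
    accuracy = sum(cm[i][i] for i in range(n)) / total
    precisions, recalls, f1s = [], [], []
    for c in range(n):
        tp = cm[c][c]
        predicted = sum(cm[r][c] for r in range(n))  # column sum
        actual = sum(cm[c])                          # row sum
        p = tp / predicted if predicted else 0.0
        r = tp / actual if actual else 0.0
        f = 2 * p * r / (p + r) if (p + r) else 0.0
        precisions.append(p)
        recalls.append(r)
        f1s.append(f)
    return {
        "accuracy": accuracy,
        "precision": sum(precisions) / n,
        "recall": sum(recalls) / n,
        "f1": sum(f1s) / n,
    }


# Toy 3-class confusion matrix (illustrative values, not project results).
cm = [
    [8, 2, 0],
    [1, 7, 2],
    [0, 1, 9],
]
metrics = macro_metrics(cm)
print({name: round(value, 4) for name, value in metrics.items()})
```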

Visualizations

The project automatically generates comprehensive visualizations for model analysis and comparison.

Model Performance Comparison

Overall performance metrics comparison across all models. Hybrid CNN-LSTM with Attention achieves the best accuracy (87.01%).

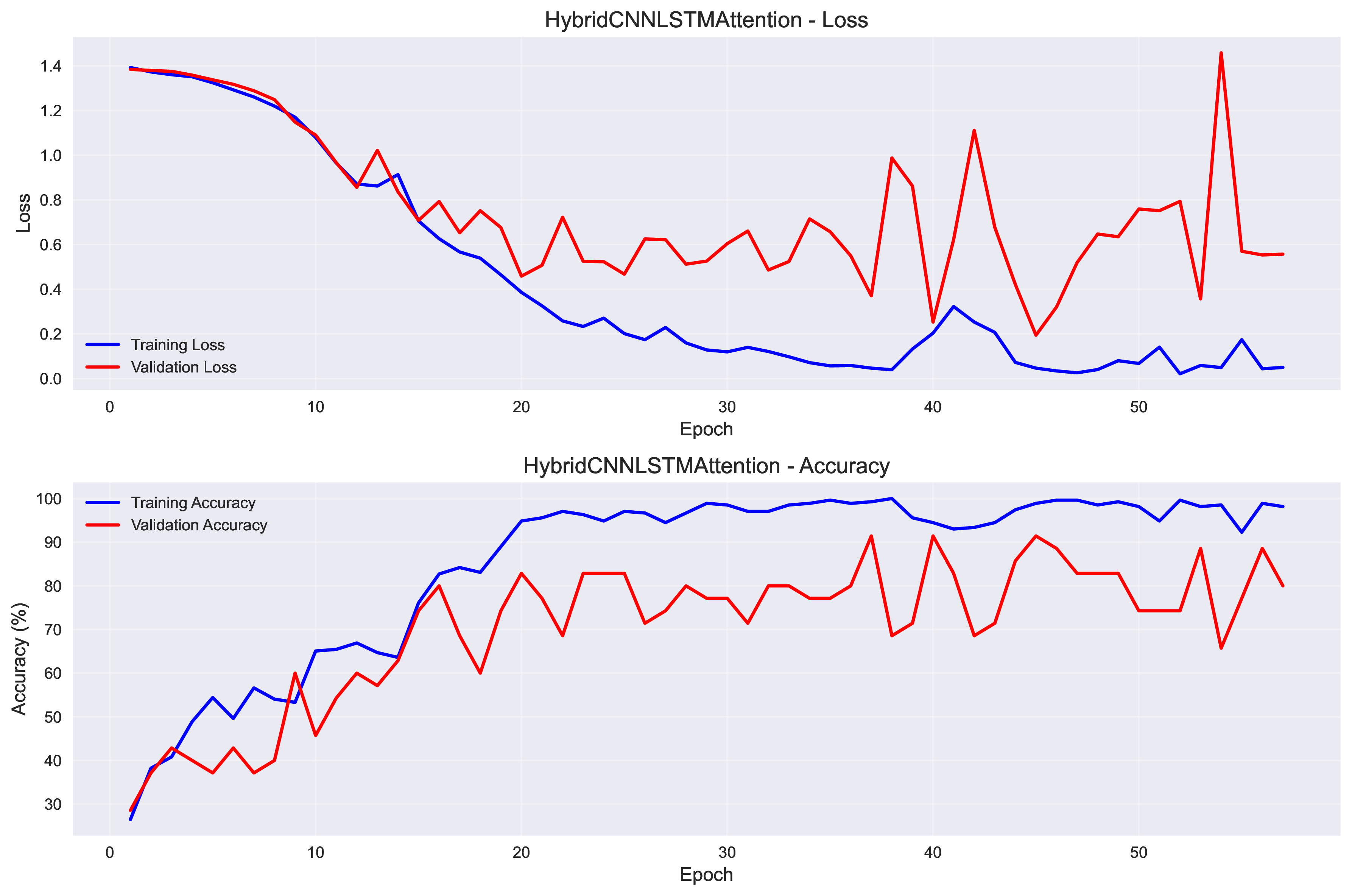

Training Progress

Best Model: Hybrid CNN-LSTM with Attention

Training and validation loss/accuracy for the best-performing model over 200 epochs. The model converges around epoch 40 with validation accuracy reaching 91.43%.

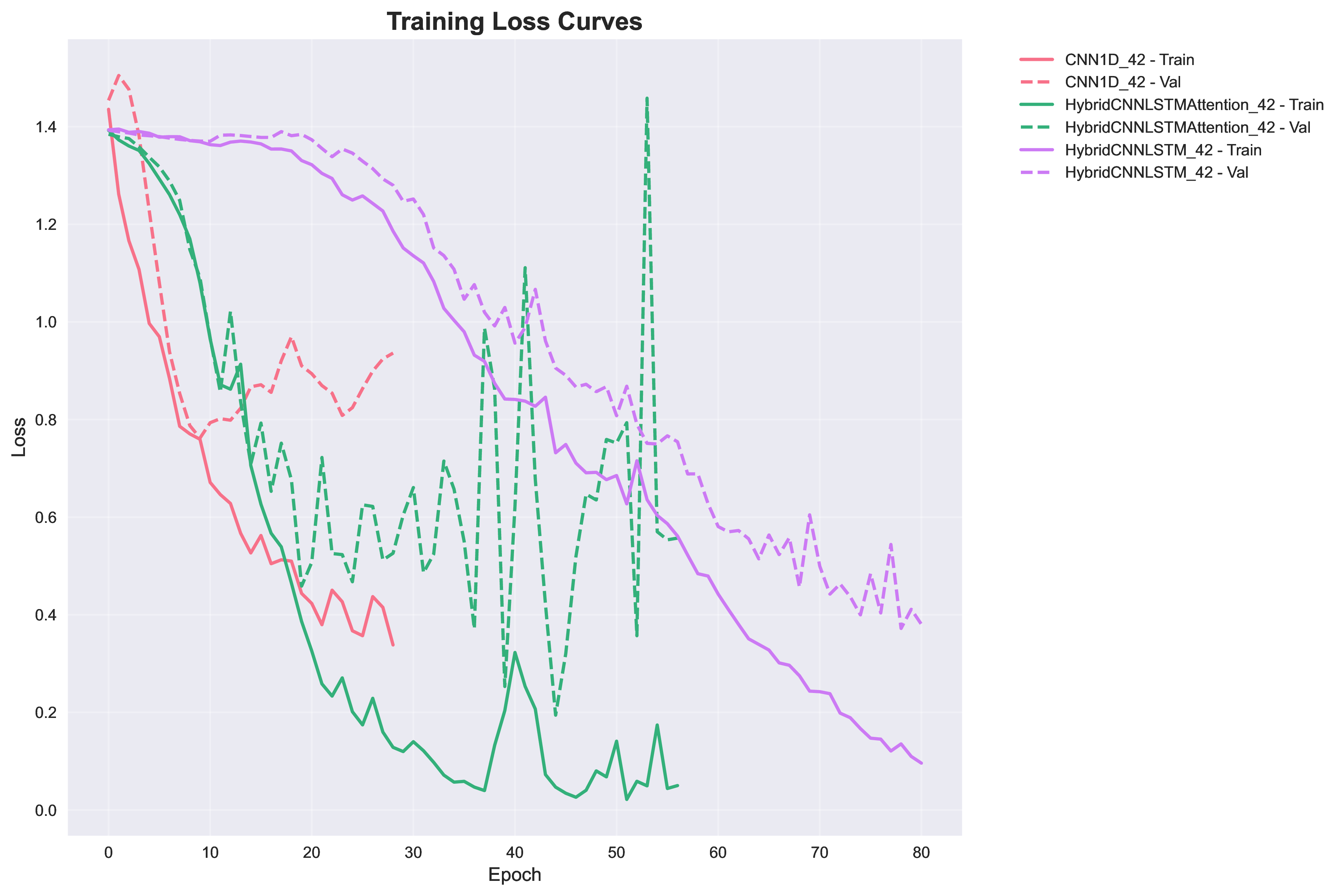

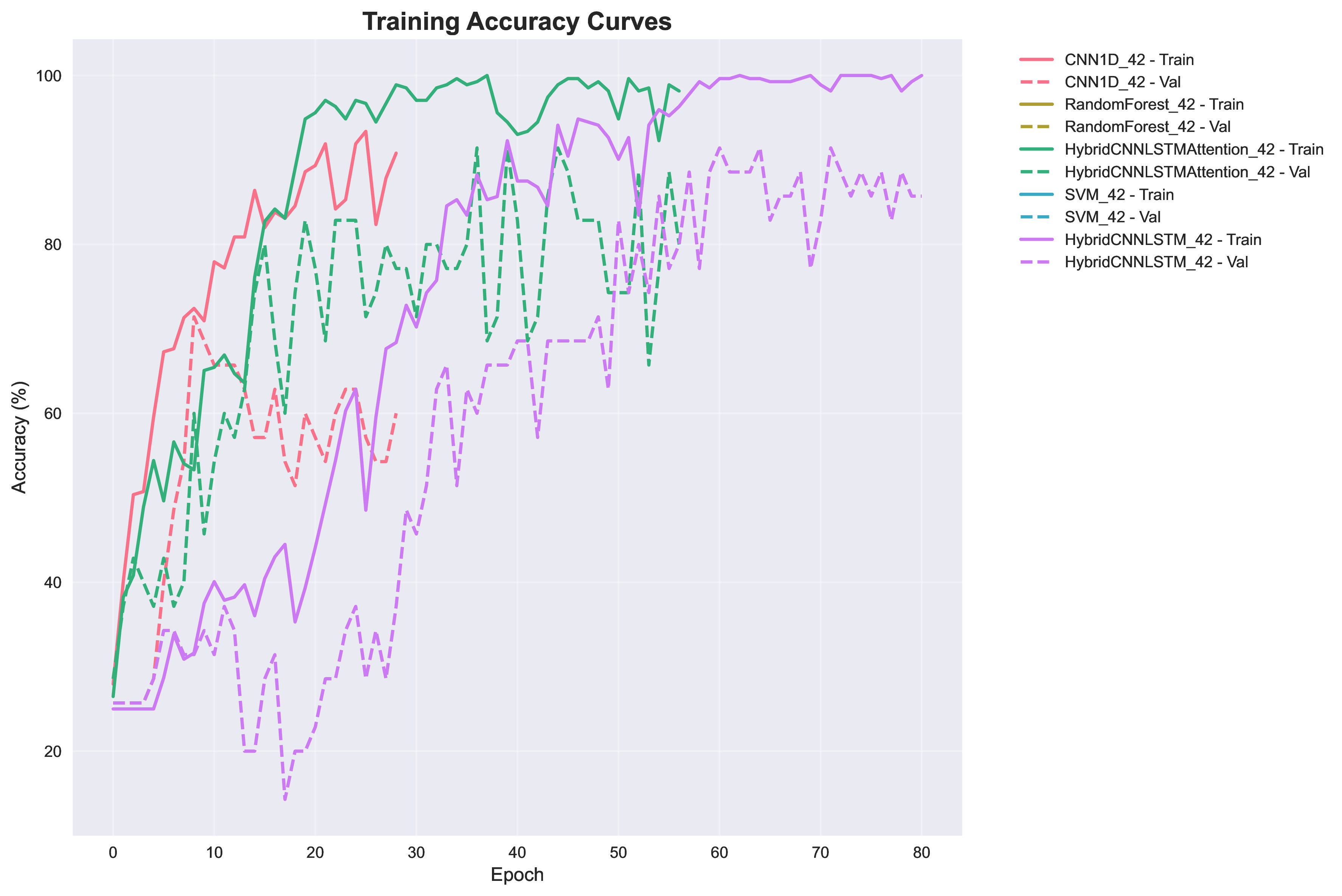

All Models Training Comparison

Comparison of training progress across all neural network models. Hybrid models show faster convergence and better final performance.

Confusion Matrices

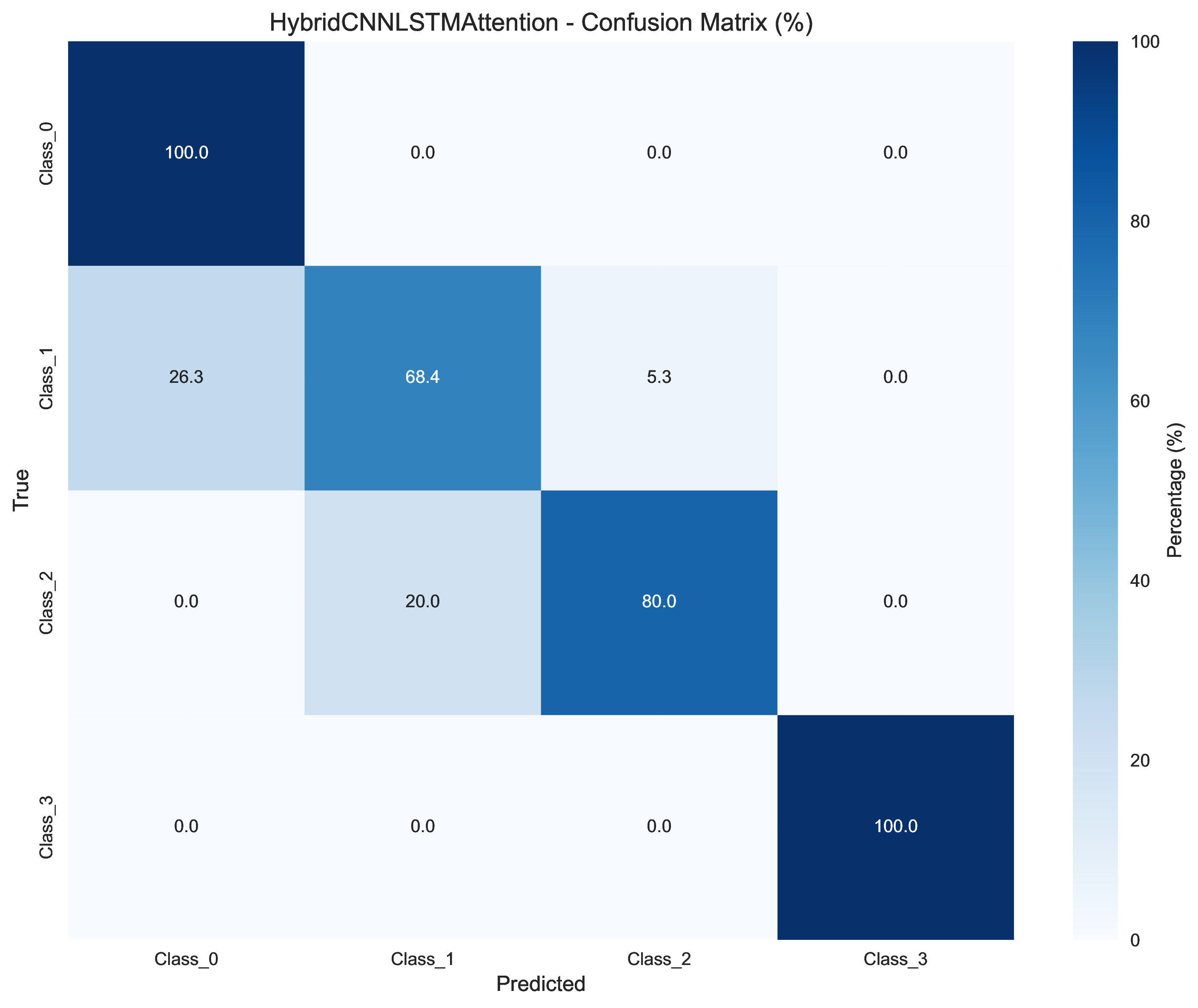

Best Model: Hybrid CNN-LSTM with Attention

Confusion matrix for the best model showing strong performance across all 4 classes, with perfect classification for class 3.

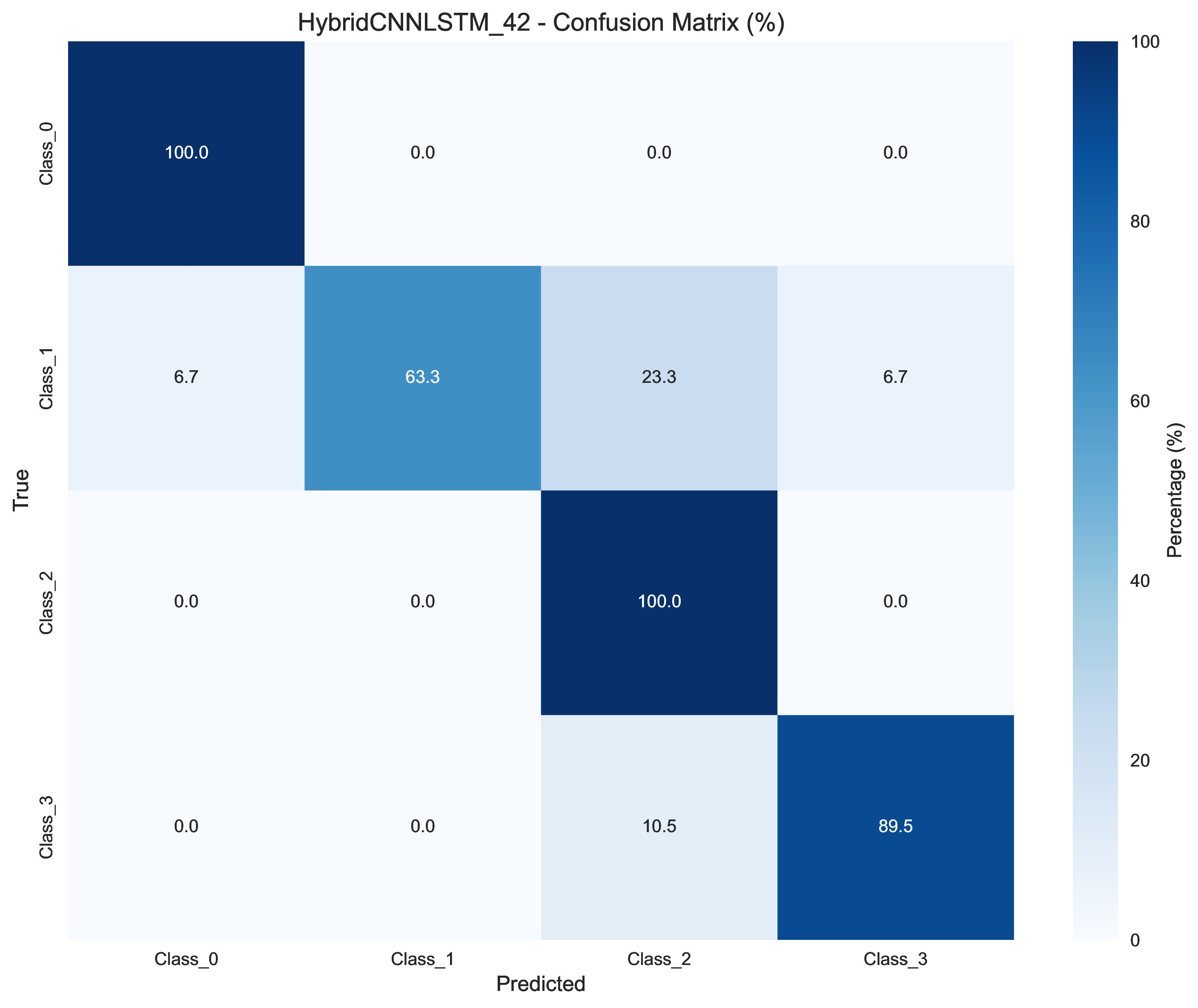

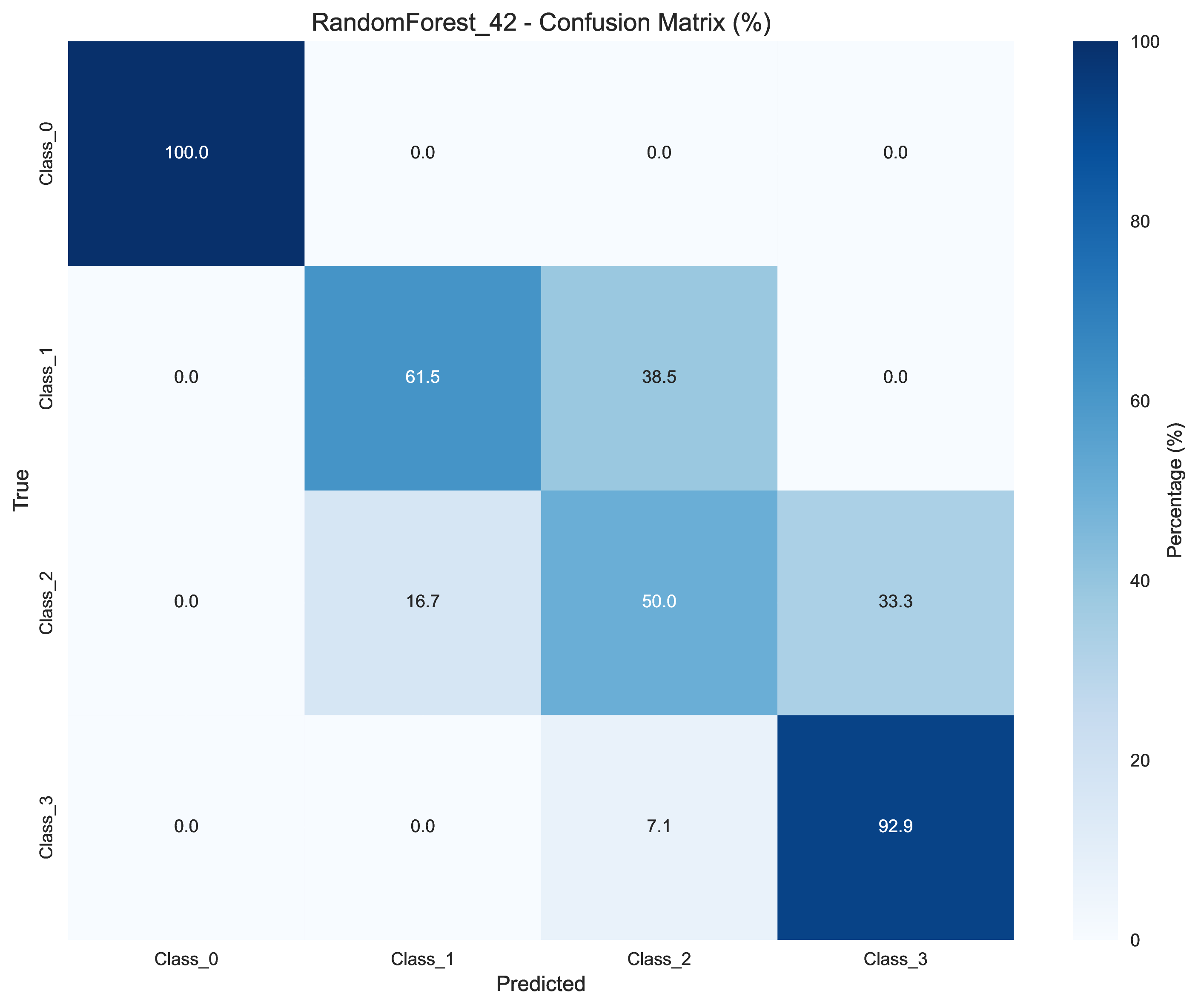

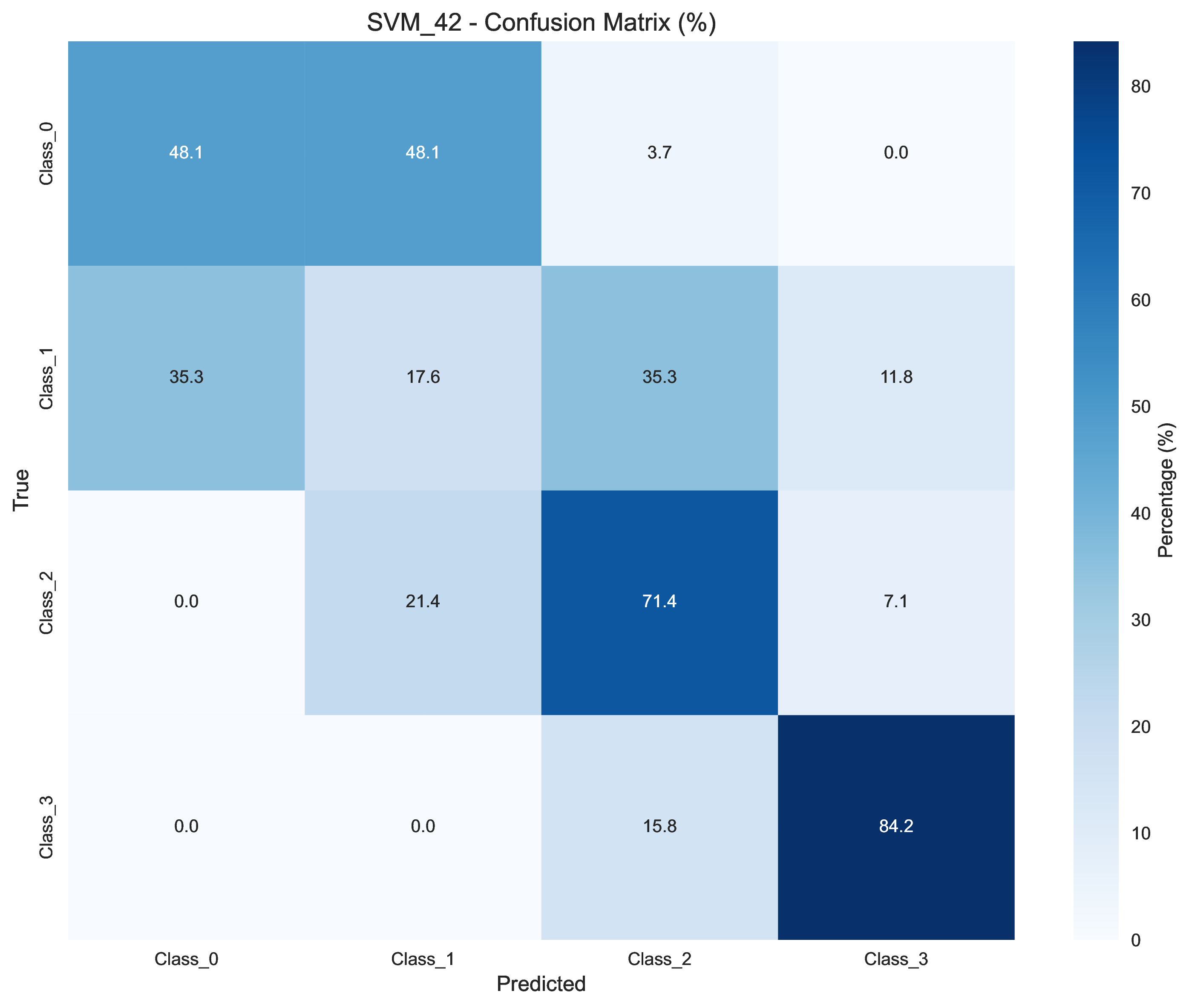

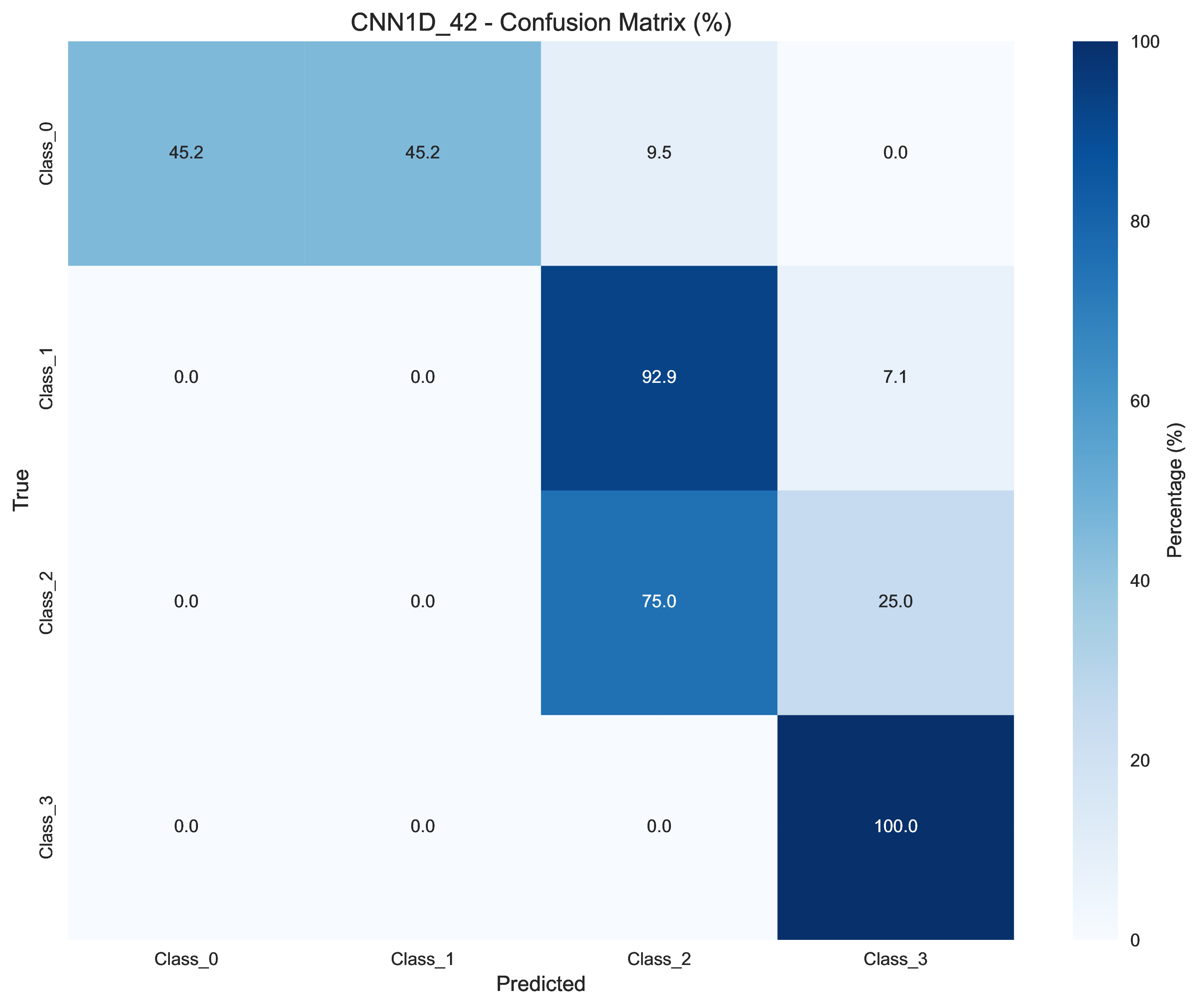

All Models Confusion Matrices

| Model | Confusion Matrix |
|---|---|
| Hybrid CNN-LSTM |  |
| Random Forest |  |
| SVM |  |
| 1D CNN |  |

Detailed Performance Analysis

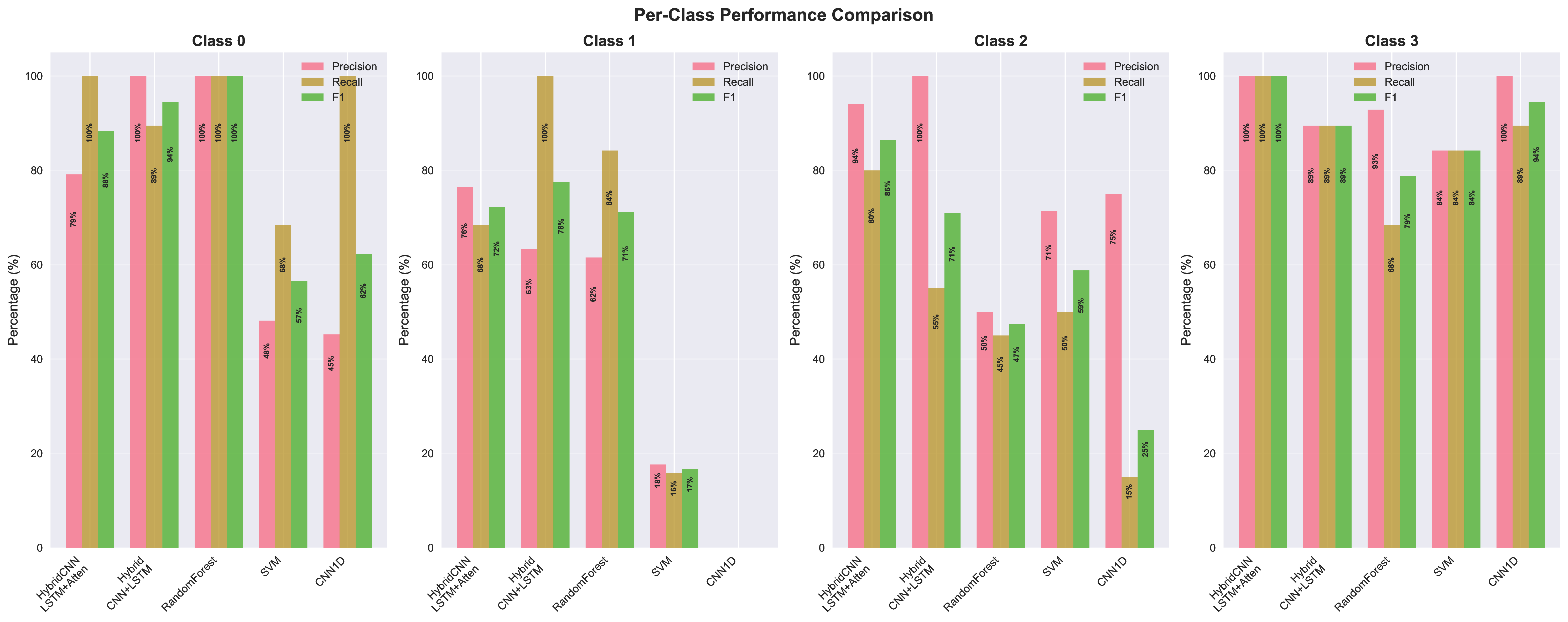

Per-Class Performance

Detailed per-class performance breakdown showing precision, recall, and F1-score for each model across all 4 classes.

Performance Heatmap

Heatmap visualization of model performance across different metrics (accuracy, precision, recall, F1-score).

Quick Start

Installation

    # Create virtual environment and install
    uv venv --python 3.12.12
    source .venv/bin/activate
    uv pip install -e .

Training Models

    # Train the best model (Hybrid CNN-LSTM with Attention)
    ./train.sh hybrid_attention

    # Train other models
    ./train.sh hybrid_cnn_lstm
    ./train.sh random_forest
    ./train.sh cnn_1d
    ./train.sh svm

Model Configuration

All models use YAML configuration files with parameters for:

- Data preprocessing (window size, stride, split ratios)

- Model architecture (layer sizes, attention mechanisms)

- Training (epochs, batch size, learning rate, optimizer)

Key Findings

- Hybrid architectures outperform the pure CNN: Combining a CNN for feature extraction with an LSTM for temporal modeling yields the best results (83.12% to 87.01% accuracy, versus 50.65% for the 1D CNN)

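- Attention mechanism provides a boost: Adding spatial attention improves accuracy by about 3.9 percentage points (87.01% vs. 83.12%) over the standard Hybrid CNN-LSTM, although the two models also use different optimizers (Adam vs. SGD), so part of the gap may come from the training setup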

- Random Forest is a competitive baseline: It achieves 74.03% accuracy in about 30 seconds of training, ahead of the 1D CNN (50.65%), while the SVM reaches only 54.55%

- Model convergence: Neural networks converge within 40-60 epochs, with early stopping preventing overfitting
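
The early stopping mentioned in the last finding can be sketched as a small patience counter on the validation loss. The source does not give the stopping criterion, so the class name, the `patience` and `min_delta` parameters, and the loss values below are all illustrative assumptions.

```python
class EarlyStopping:
    """Signal a stop once validation loss has not improved for `patience` epochs."""

    def __init__(self, patience: int = 10, min_delta: float = 0.0) -> None:
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("inf")
        self.bad_epochs = 0

    def step(self, val_loss: float) -> bool:
        """Record one epoch's validation loss; return True when training should stop."""
        if val_loss < self.best - self.min_delta:
            self.best = val_loss  # improvement: remember it and reset the counter
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1  # no improvement this epoch
        return self.bad_epochs >= self.patience


# Made-up validation losses: improvement stalls after the third epoch.
val_losses = [0.9, 0.7, 0.6, 0.61, 0.62, 0.63, 0.64]
stopper = EarlyStopping(patience=3)
stopped_at = None
for epoch, loss in enumerate(val_losses, start=1):
    if stopper.step(loss):
        stopped_at = epoch
        break
print(stopped_at, stopper.best)
```

In practice the model weights from the best epoch are usually saved and restored after stopping, so the final model is the one with the lowest validation loss rather than the last one trained.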

Training Statistics

- Dataset: 7 features, 4 classes, time series data

- Window size: 50 time steps

- Stride: 10 time steps

- Train/Val/Test split: 80%/10%/10%

- Training epochs: 200 (neural networks); traditional ML models are fit in a single pass

- Batch size: 64

- Optimizer: Adam (LR=0.001) for attention model, SGD (LR=0.01) for hybrid CNN-LSTM
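
The window and stride settings above determine how many samples a recording yields. A recording of T time steps produces floor((T - 50) / 10) + 1 windows when T is at least 50. The sketch below is a minimal illustration of that segmentation. The function name and the 200-step recording are hypothetical, and how the project handles leftover steps at the end of a recording is not stated in the source (here they are dropped).

```python
def sliding_windows(series, window=50, stride=10):
    """Cut a sequence of time steps into overlapping fixed-length windows.

    Trailing steps that do not fill a complete window are dropped.
    """
    return [
        series[start:start + window]
        for start in range(0, len(series) - window + 1, stride)
    ]


# Hypothetical recording: 200 time steps with 7 features each.
series = [[float(t)] * 7 for t in range(200)]
windows = sliding_windows(series, window=50, stride=10)

# (200 - 50) // 10 + 1 windows, each of 50 steps by 7 features.
print(len(windows), len(windows[0]), len(windows[0][0]))
```

With a stride of 10 and a window of 50, consecutive windows share 40 time steps, which is why the split into train, validation, and test sets has to be made on whole groups rather than on individual windows.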

Code Quality

The project maintains high code quality standards:

- Type hints: All functions include type annotations

- Documentation: Comprehensive docstrings and README

- Testing: Unit tests and smoke tests for all components

- Formatting: Consistent code style with black and flake8

Extending the Framework

The modular architecture makes it easy to add new models:

- Create model class in src/models/

- Register in src/models/__init__.py

- Create config file in configs/

- Add unit tests in tests/test_models.py

The framework supports both PyTorch neural networks and scikit-learn traditional models.
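
One common way to implement the "register" step is a name-to-class registry. The source does not show how src/models/__init__.py actually registers models, so everything in this sketch is hypothetical: `MODEL_REGISTRY`, `register_model`, `build_model`, and the placeholder model class.

```python
from typing import Callable, Dict

# Hypothetical registry mapping a model name to its class.
MODEL_REGISTRY: Dict[str, Callable[..., object]] = {}


def register_model(name: str):
    """Class decorator that records a model class under `name`."""
    def decorator(cls):
        if name in MODEL_REGISTRY:
            raise ValueError(f"Model {name!r} is already registered")
        MODEL_REGISTRY[name] = cls
        return cls
    return decorator


@register_model("my_new_model")
class MyNewModel:
    """Placeholder model; a real one would subclass a PyTorch or scikit-learn base."""

    def __init__(self, hidden_size: int = 64) -> None:
        self.hidden_size = hidden_size


def build_model(name: str, **kwargs):
    """Look up a registered model by name and instantiate it with config values."""
    try:
        factory = MODEL_REGISTRY[name]
    except KeyError:
        raise ValueError(
            f"Unknown model {name!r}; available: {sorted(MODEL_REGISTRY)}"
        ) from None
    return factory(**kwargs)


model = build_model("my_new_model", hidden_size=32)
print(type(model).__name__, model.hidden_size)
```

With this pattern, a training script can select a model by the name given in its config file, and adding a model does not require touching the training loop.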

Performance Insights

Key Findings from Actual Training

- Hybrid CNN-LSTM with Attention achieves 87.01% accuracy, outperforming all other models

- Spatial attention provides an accuracy improvement of about 3.9 percentage points over the standard CNN-LSTM (87.01% vs. 83.12%)

- Among traditional ML models, Random Forest achieves 74.03% accuracy with about 30 seconds of training, while SVM reaches 54.55%

- Data leakage prevention through group-based splitting is essential for reliable evaluation
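
The group-based splitting in the last point can be sketched as follows. Windows cut from the same recording overlap, so whole groups are assigned to train, validation, or test, and no recording contributes windows to more than one split. What counts as a "group" in the project is not stated in the source; here it is assumed to be an identifier of the recording each window came from, and the function name is hypothetical.

```python
import random


def group_split(groups, ratios=(0.8, 0.1, 0.1), seed=42):
    """Split sample indices so that each group lands in exactly one subset.

    groups: one group id per sample (for example, the source recording of a window).
    Returns a dict with lists of sample indices for "train", "val", and "test".
    """
    unique = sorted(set(groups))
    random.Random(seed).shuffle(unique)  # seeded, so the split is repeatable
    n_train = round(len(unique) * ratios[0])
    n_val = round(len(unique) * ratios[1])
    train_groups = set(unique[:n_train])
    val_groups = set(unique[n_train:n_train + n_val])
    split = {"train": [], "val": [], "test": []}
    for index, group in enumerate(groups):
        if group in train_groups:
            split["train"].append(index)
        elif group in val_groups:
            split["val"].append(index)
        else:
            split["test"].append(index)  # remaining groups form the test set
    return split


# Hypothetical data: 20 recordings with 5 windows each.
groups = [i // 5 for i in range(100)]
split = group_split(groups)
print({name: len(indices) for name, indices in split.items()})
```

The ratios apply to groups rather than to windows, so the resulting sample counts match 80%/10%/10% exactly only when groups are equally sized.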

Training Efficiency

| Model | Training Time | Accuracy |
|---|---|---|
| Hybrid CNN-LSTM + Attention | ~3-5 minutes | 87.01% |
| Standard CNN-LSTM | ~2-3 minutes | 83.12% |
| 1D CNN | ~3-4 minutes | 50.65% |
| Random Forest | ~30 seconds | 74.03% |
| SVM | ~20 seconds | 54.55% |

Conclusion

This project demonstrates a comprehensive framework for time series classification with reproducible results and automatic visualization. Key takeaways:

- Hybrid architectures work best: CNN-LSTM with attention achieves 87.01% accuracy

- Reproducibility matters: Fixed seeds and environment tracking enable reliable comparisons

- Visualization is essential: Automatic chart generation helps understand model behavior

- Traditional ML has value: Random Forest provides good performance with minimal training time

The complete code is available at https://github.com/WHULai/Time-Series-Classifier, building upon the original work from Hybrid CNN-LSTM with Spatial Attention (https://github.com/zamaex96/Hybrid-CNN-LSTM-with-Spatial-Attention).